VR-Methods: Virtual Reality

Head-Mounted Display


In our nemolab, we use several head-mounted displays (HMDs) to investigate the perception of movement in sports. HMDs can present representative, naturalistic 3D environments, which lets us study perception under highly standardized yet representative experimental conditions. We are currently equipped with an Oculus Rift, an HTC VIVE, and an HTC VIVE Pro.


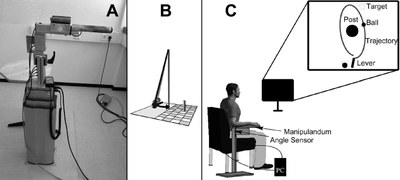

vBot


The vBot is a two-dimensional robotic manipulandum for investigating motor learning and control processes. It was developed by a group of researchers headed by Daniel M. Wolpert and Ian Howard. It can measure hand position and apply forces. Our vBot is currently located at the Department of Psychology, where it is used in joint projects.

http://www.sciencedirect.com/science/article/pii/S0165027009002635

Skittles

Skittles is a custom-made semi-virtual throwing task that we use to examine different aspects and conditions of motor learning. The goal is to move a metal lever so as to accelerate and throw a virtual ball that hits a virtual target shown on a projection screen or monitor. The participant rests her forearm on the lever and releases the ball by lifting her index finger off an electrical contact at the tip of the lever. The lever position is recorded by a magnetic angle sensor with a resolution of 12 bits (0.09°) at a sampling rate of 1000 Hz. The throwing task is relatively complex and novel to participants. It also allows a large number of trials to be collected, which makes it well suited to learning studies.
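The sensor figures above can be related with a little arithmetic. The following is a minimal sketch, not the lab's acquisition code: it assumes the 12-bit sensor spans a full 360° rotation (which reproduces the stated 0.09° step), and the function names `counts_to_degrees` and `angular_velocity` are hypothetical.

```python
BITS = 12                   # sensor resolution stated in the text
COUNTS = 2 ** BITS          # 4096 distinct readings
SAMPLE_RATE_HZ = 1000       # sampling rate stated in the text
FULL_SCALE_DEG = 360.0      # assumption: the sensor covers one full rotation

# Angular step per raw count: 360 / 4096 = 0.087890625 degrees
DEG_PER_COUNT = FULL_SCALE_DEG / COUNTS


def counts_to_degrees(raw):
    """Convert a raw sensor reading (0..4095) to a lever angle in degrees."""
    if not 0 <= raw < COUNTS:
        raise ValueError(f"raw reading {raw} is outside the 12-bit range")
    return raw * DEG_PER_COUNT


def angular_velocity(raw_samples):
    """Finite-difference angular velocity (deg/s) between consecutive samples.

    Wrap-around at 0/360 degrees is not handled in this sketch.
    """
    angles = [counts_to_degrees(r) for r in raw_samples]
    return [(b - a) * SAMPLE_RATE_HZ for a, b in zip(angles, angles[1:])]


if __name__ == "__main__":
    print(round(DEG_PER_COUNT, 2))
    print(angular_velocity([100, 101, 103]))
```

Under these assumptions, a change of a single count between two consecutive samples already corresponds to roughly 88°/s, so raw finite differences are coarse and would typically be smoothed before estimating the release velocity.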

Multisensory integration

In our experimental setup for investigating multisensory integration, three sensory channels can be generated in virtual reality. Haptic information is generated by force fields as participants interact with a 3DOF haptic robotic device (HapticMASTER, Moog). In addition, 3DOF visual information can be provided via a head-mounted display and acoustic information via headphones. A VICON motion capture system is integrated into the setup to track head position and orientation online, which is required for generating the visual and acoustic signals.
